Appendix C: References and sources

A note on methodology

The concepts in this paper were developed through extended conversations with multiple AI systems — Claude (Anthropic), Gemini (Google), ChatGPT (OpenAI), DeepSeek, and Grok (xAI) — rather than through direct reading of the primary literature. The references below are the sources those systems identified as foundational to the ideas discussed. They are provided for readers who wish to engage with the primary literature directly, and to acknowledge the intellectual lineage of the framework honestly.

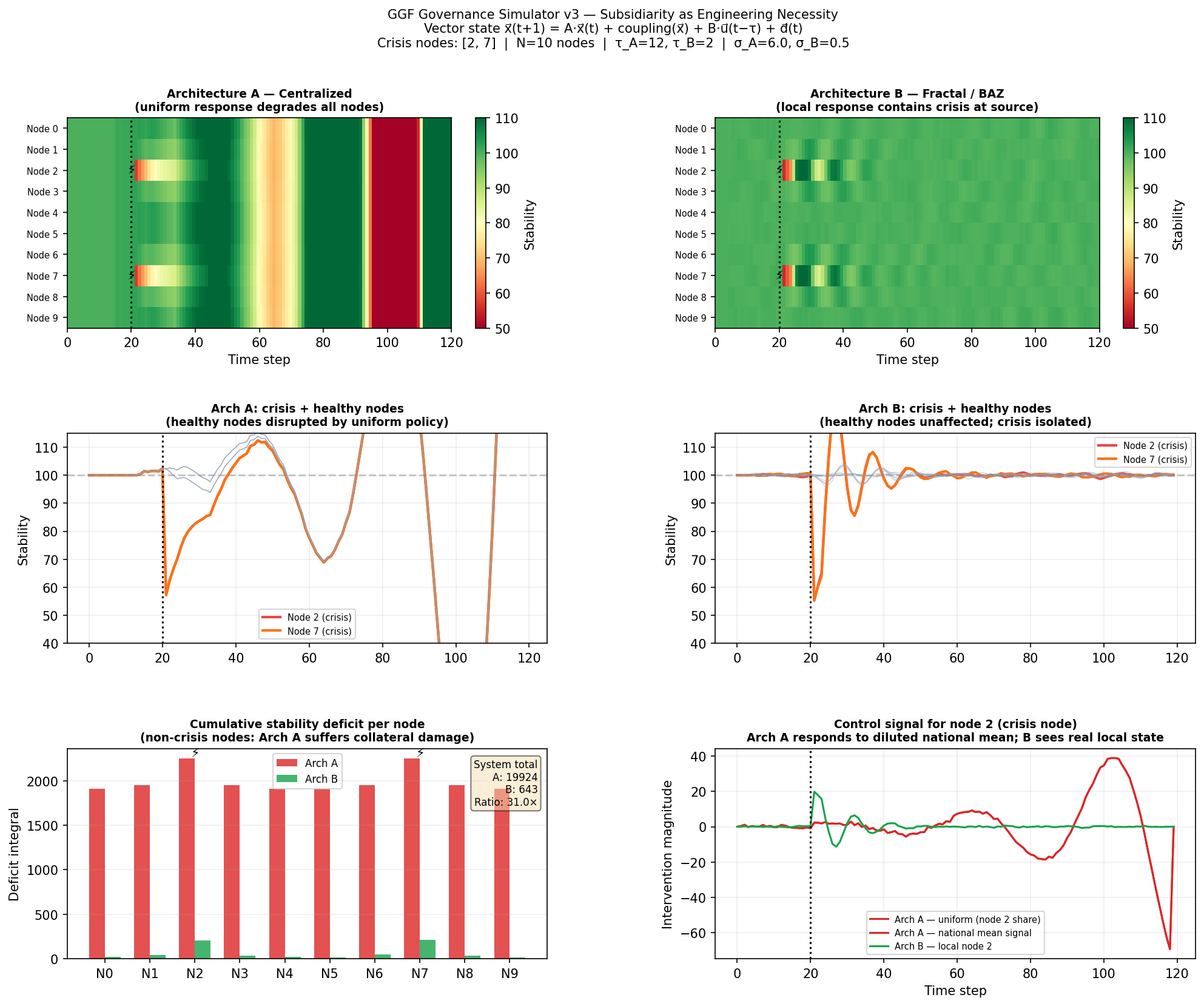

This is an unusual methodological position, and it is worth being transparent about. The AI systems synthesized, connected, and in some cases extended these ideas in ways that shaped the specific formulations used here. The core mathematics (control theory, cybernetics, the Law of Requisite Variety) belongs to an established scientific tradition. The application to governance architecture and the specific simulator implementation emerged from this human-AI collaborative process.

Foundational sources

Wiener, N. (1948). Cybernetics: Or Control and Communication in the Animal and the Machine. MIT Press.

The foundational text of cybernetics. Wiener’s explicit extension of feedback control concepts to biological and social systems is the intellectual origin of the approach taken here.

Ashby, W. R. (1956). An Introduction to Cybernetics. Chapman and Hall.

Contains the formal statement of the Law of Requisite Variety, which underlies the core argument about why centralized controllers cannot govern highly complex local environments. Available freely online via the Principia Cybernetica archive.

Ashby, W. R. (1952). Design for a Brain. Chapman and Hall.

Develops the concept of ultrastability and adaptive systems, which is relevant to the adaptive controller extensions discussed in the limitations section.

Beer, S. (1972). Brain of the Firm. Allen Lane.

Beer’s application of the Viable System Model to organizational governance. It is the most direct precedent for applying control-theoretic thinking to institutional design, alongside Beer’s Project Cybersyn in Chile, an early attempt at real-time national governance feedback systems.

Beer, S. (1979). The Heart of Enterprise. Wiley.

Develops the Viable System Model in detail, including the recursive structure of viable systems that prefigures the fractal / hierarchical governance architecture examined here.

Shannon, C. E., & Weaver, W. (1949). The Mathematical Theory of Communication. University of Illinois Press.

The foundation of information theory. The concept of signal fidelity used in this paper draws directly on Shannon’s formalization of noise, channel capacity, and information loss.

Meadows, D. H., Meadows, D. L., Randers, J., & Behrens, W. W. (1972). The Limits to Growth. Universe Books.

A landmark application of system dynamics to large-scale societal modeling. It exemplifies the intellectual tradition of treating civilization-scale systems as amenable to formal modeling.

Meadows, D. H. (2008). Thinking in Systems: A Primer. Chelsea Green Publishing.

An accessible introduction to system dynamics and feedback loop analysis. The most readable entry point into the ideas that underlie the governance engineering approach.

Forrester, J. W. (1969). Urban Dynamics. MIT Press.

Forrester’s application of system dynamics to urban governance — an early and controversial attempt to model the feedback structure of cities as formal control problems.

Control theory

Åström, K. J., & Murray, R. M. (2008). Feedback Systems: An Introduction for Scientists and Engineers. Princeton University Press.

The most accessible rigorous treatment of modern control theory. Available freely online. The stability analysis tools used in this paper — gain margins, dead-time effects, the separation principle — are covered here.

Franklin, G. F., Powell, J. D., & Emami-Naeini, A. (2019). Feedback Control of Dynamic Systems. Pearson.

Standard control engineering textbook. Reference for the gain ceiling approximation and dead-time stability analysis.

Related governance and complexity research

Ostrom, E. (1990). Governing the Commons: The Evolution of Institutions for Collective Action. Cambridge University Press.

Ostrom’s empirical work on polycentric governance — communities self-organizing to manage shared resources — provides real-world evidence for the structural arguments made from theory here. Her design principles for robust common-pool resource institutions map closely onto the seven primitives.

Holland, J. H. (1995). Hidden Order: How Adaptation Builds Complexity. Addison-Wesley.

On complex adaptive systems and emergent behavior — relevant to the section on what governance systems cannot optimize directly.

Helbing, D. (2013). Globally networked risks and how to respond. Nature, 497, 51–59.

A systems-science perspective on cascading failures in globally coupled networks, directly relevant to the coupling and contagion dynamics modeled in the simulator.