Appendix C: References and sources

A note on methodology

As with the preceding papers in this series, the concepts here were developed through extended dialogue with multiple AI systems — Claude (Anthropic), ChatGPT (OpenAI), Gemini (Google), DeepSeek, and Grok (xAI) — rather than through direct reading of the primary literature. The references below are the sources those systems identified as foundational, provided for readers who wish to engage with the primary literature directly.

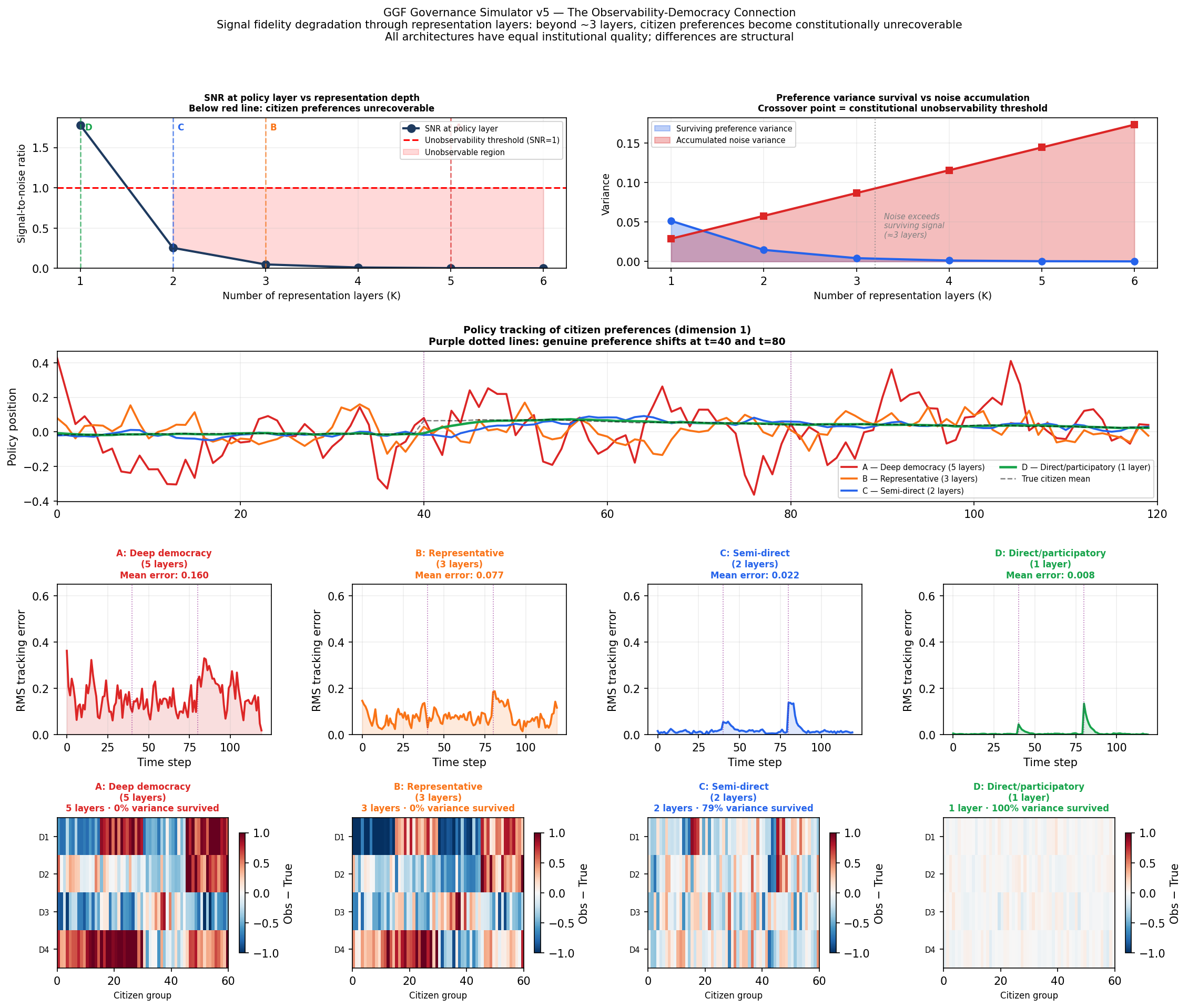

The specific contribution of this paper — formalizing democratic representation as a degraded observation channel, deriving the constitutional unobservability threshold, and demonstrating the preference-policy gap as an architectural consequence — emerged from this collaborative process. The underlying mathematical tools belong to long-established traditions in information theory and control theory. The political science empirics belong to an independent literature. This paper brings them together.

Information theory and observability

Shannon, C. E., & Weaver, W. (1949). The Mathematical Theory of Communication. University of Illinois Press.

The channel capacity theorem is the foundational result underlying this paper’s argument. Shannon’s demonstration that every communication channel has a maximum rate of reliable information transmission, bounded by its bandwidth and noise characteristics, and that this bound cannot be overcome by improving the communicators, is the precise formal basis for the constitutional unobservability result.
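For the special case of a band-limited channel with additive white Gaussian noise, the capacity bound takes the Shannon-Hartley form C = B log2(1 + S/N). The sketch below is only an illustration of that textbook formula; the function name and the example numbers are assumptions made here, not part of this paper's model.

```python
import math


def shannon_hartley_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Capacity in bits per second of an AWGN channel: C = B * log2(1 + S/N).

    snr_linear is the signal-to-noise power ratio as a plain ratio, not in dB.
    """
    if bandwidth_hz < 0 or snr_linear < 0:
        raise ValueError("bandwidth and SNR must be non-negative")
    return bandwidth_hz * math.log2(1.0 + snr_linear)


# Illustrative values only (assumed, not taken from the paper).
baseline = shannon_hartley_capacity(1.0, 1.0)      # unit bandwidth, S/N = 1
no_signal = shannon_hartley_capacity(1.0, 0.0)     # pure noise carries nothing
print(baseline, no_signal)
```

The bound depends only on the channel parameters B and S/N. Nothing about the sender or receiver appears in the formula, which is the sense in which better communicators cannot exceed it.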

Cover, T. M., & Thomas, J. A. (2006). Elements of Information Theory. 2nd ed. Wiley.

The data processing inequality, which states that no processing of a signal can increase its mutual information with the source, is formally derived and extensively discussed here. Applied to this paper’s model, the inequality implies that no post-hoc institutional processing at the policy layer can recover information destroyed by aggregation earlier in the representation chain.
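The inequality can be checked numerically on a toy Markov chain X -> Y -> Z. In the sketch below, the four-position preference variable, the 0.85 reporting accuracy, and the two-bin aggregation are all hypothetical choices made for illustration; only the inequality I(X;Z) <= I(X;Y) is the general result.

```python
import math
from collections import defaultdict


def mutual_information(joint):
    """Return I(A;B) in bits for a joint distribution {(a, b): probability}."""
    p_a, p_b = defaultdict(float), defaultdict(float)
    for (a, b), p in joint.items():
        p_a[a] += p
        p_b[b] += p
    return sum(
        p * math.log2(p / (p_a[a] * p_b[b]))
        for (a, b), p in joint.items()
        if p > 0
    )


# Hypothetical setup: X is one of four equally likely preference positions.
# Y is a noisy report of X: correct with probability 0.85, otherwise one of
# the three other positions with probability 0.05 each.
positions = range(4)
joint_xy = {
    (x, y): 0.25 * (0.85 if x == y else 0.05)
    for x in positions
    for y in positions
}

# Z aggregates Y into two bins (positions 0-1 versus 2-3). Z depends on X
# only through Y, so X -> Y -> Z forms a Markov chain.
joint_xz = defaultdict(float)
for (x, y), p in joint_xy.items():
    joint_xz[(x, y // 2)] += p

i_xy = mutual_information(joint_xy)
i_xz = mutual_information(joint_xz)
print(f"I(X;Y) = {i_xy:.3f} bits, I(X;Z) = {i_xz:.3f} bits")
```

Any other function of Y could replace the two-bin aggregation and the inequality would still hold; the aggregation step can discard information about X but cannot create it.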

Kalman, R. E. (1960). A new approach to linear filtering and prediction problems. Journal of Basic Engineering, 82(1), 35–45.

Kalman’s foundational paper establishes the observability condition for linear systems. The observability matrix construction referenced in Appendix A follows this tradition.
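For readers who want to experiment with the rank condition, the following is a minimal sketch of the standard test for a linear system x' = Ax, y = Cx: stack C, CA, ..., CA^(n-1) and check whether the result has rank n. The helper names and the two-state example are assumptions made here, and the notation may differ from that used in Appendix A.

```python
from fractions import Fraction


def mat_mul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def rank(matrix):
    """Rank by Gaussian elimination over exact rationals."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def observability_matrix(A, C):
    """Stack C, CA, ..., CA^(n-1) for an n-state system."""
    blocks, current = [], C
    for _ in range(len(A)):
        blocks.extend(current)
        current = mat_mul(current, A)
    return blocks


def is_observable(A, C):
    """True when the observability matrix has full rank n."""
    return rank(observability_matrix(A, C)) == len(A)


# Hypothetical two-state system: state 2 feeds into state 1.
A = [[1, 1], [0, 1]]
print(is_observable(A, [[1, 0]]))  # measuring state 1 also reveals state 2
print(is_observable(A, [[0, 1]]))  # measuring state 2 says nothing about state 1
```

Exact rational arithmetic is used so that the rank is not affected by floating-point round-off; a numerical library would normally use a singular value decomposition instead.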

Åström, K. J., & Murray, R. M. (2008). Feedback Systems: An Introduction for Scientists and Engineers. Princeton University Press. (Available freely at cds.caltech.edu/~murray/amwiki)

Chapter 7 covers observability and observer design in a form accessible to scientists without a control engineering background. The formal observability condition and its implications for state reconstruction are developed clearly.

Democratic theory and preference transmission

Dahl, R. A. (1971). Polyarchy: Participation and Opposition. Yale University Press.

The foundational modern statement of democratic theory as preference transmission. Dahl’s criteria for polyarchy — including the responsiveness criterion that government policies correspond to citizen preferences — are the normative commitments whose technical feasibility this paper examines.

Bartels, L. M. (2008). Unequal Democracy: The Political Economy of the New Gilded Age. Princeton University Press.

Empirical documentation of the weak and declining correlation between citizen preferences and policy outcomes in the United States. Bartels attributes this primarily to elite influence and unequal political participation; the observability framework provides a complementary structural explanation.

Gilens, M., & Page, B. I. (2014). Testing theories of American politics: Elites, interest groups, and average citizens. Perspectives on Politics, 12(3), 564–581.

One of the most-cited empirical studies of preference-policy correspondence. Finds that average citizens’ preferences have near-zero independent influence on policy outcomes once the preferences of economic elites and organized interest groups are controlled for. The observability framework suggests this is partly architectural: by the time average citizen preferences reach the policy layer through a five-layer chain, they are noise-dominated and indistinguishable from the signal injected by organized interests with shorter chains.
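The claim about chain length can be made concrete with a deliberately simple toy model. The sketch below assumes each layer behaves as an independent binary symmetric channel with the same 10 percent flip probability; both the channel type and the error rate are hypothetical choices for illustration and are not the model or parameters of this paper or of Gilens and Page.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy in bits of a coin with bias p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def cascade_flip_probability(p: float, layers: int) -> float:
    """Net flip probability after `layers` identical binary symmetric stages.

    Uses the closed form 1 - 2*p_K = (1 - 2*p)**K for stages in series.
    """
    return 0.5 * (1.0 - (1.0 - 2.0 * p) ** layers)


def cascade_capacity(p: float, layers: int) -> float:
    """Capacity in bits per use of the end-to-end binary symmetric channel."""
    return 1.0 - binary_entropy(cascade_flip_probability(p, layers))


# Assumed per-layer flip probability of 0.1; compare short and long chains.
for k in range(1, 6):
    print(k, round(cascade_capacity(0.1, k), 3))
```

Under these assumptions the capacity falls with every added layer, so a signal arriving through five layers carries far less information per use than one arriving through one or two.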

Achen, C. H., & Bartels, L. M. (2016). Democracy for Realists: Why Elections Do Not Produce Responsive Government. Princeton University Press.

A systematic empirical challenge to the folk theory of democracy as preference aggregation. Argues that elections do not reliably transmit voter preferences to policy. The observability framework provides a formal explanation for why they cannot, independent of the retrospective voting and group identity arguments Achen and Bartels develop.

Dryzek, J. S. (2000). Deliberative Democracy and Beyond. Oxford University Press.

Deliberative democracy as a theoretical response to the aggregation problem: it attempts to form preferences through reasoned discussion rather than aggregating pre-existing ones. The observability framework suggests deliberative mechanisms at lower layer counts (citizens deliberating directly about local decisions) preserve more signal than deliberative mechanisms inserted into a multi-layer representative chain.

Cybernetics and systems theory

Ashby, W. R. (1956). An Introduction to Cybernetics. Chapman and Hall.

The Law of Requisite Variety — a controller must have at least as much variety as the system it controls — is the general form of which the observability result is a specific case. A policy layer whose signal has been compressed through K aggregation stages has less variety than the citizen population it governs. Ashby’s law predicts it cannot govern them accurately; the observability model quantifies exactly why.

Wiener, N. (1948). Cybernetics: Or Control and Communication in the Animal and the Machine. MIT Press.

Wiener’s critique of bureaucracy as a communication system with too many filtering layers anticipates this paper’s central argument. His observation that information degrades through organizational hierarchies — that the effective decision-maker at the top of a large organization is working from a severely compressed and distorted signal — is a precursor to the formal model developed here.

Beer, S. (1979). The Heart of Enterprise. Wiley.

Beer’s treatment of the viable system’s System 4 (environmental scanning) and its relation to System 5 (policy) addresses precisely the question of how policy layers can obtain unfiltered information about the system they govern. Beer’s algedonic channel — the fast signal that bypasses the normal hierarchy in crisis — is a design response to the observability problem, though Beer does not formalize it in these terms.

Participatory and deliberative democracy

Fishkin, J. S. (2009). When the People Speak: Deliberative Democracy and Public Consultation. Oxford University Press.

The deliberative polling methodology as a practical mechanism for reducing representation layers: a random sample of citizens deliberates directly about policy questions, producing a more accurate signal than conventional polling or elections. Directly relevant to the Architecture C and D models.

Ostrom, E. (1990). Governing the Commons. Cambridge University Press.

Empirical evidence that communities can self-govern shared resources effectively at small scale, with minimal intermediation between citizens and rules. The observability framework provides a formal explanation: at small scale with minimal layers, citizen preferences are observable.

Landemore, H. (2020). Open Democracy: Reinventing Popular Rule for the Twenty-First Century. Princeton University Press.

The case for open, participatory democratic systems as responses to the representational failures of current institutions. The observability framework provides a technical grounding for the claim that representation at scale is architecturally problematic, independent of the normative arguments Landemore develops.

Political communication and media

Lippmann, W. (1922). Public Opinion. Harcourt, Brace.

The earliest systematic treatment of media as a filtering layer between citizens and the political world. Lippmann’s concept of the “pseudo-environment” — the simplified, selected representation of reality that citizens and politicians actually respond to — anticipates the noise-dominated signal problem this paper formalizes.

Prior, M. (2007). Post-Broadcast Democracy: How Media Choice Increases Inequality in Political Involvement and Polarizes Elections. Cambridge University Press.

The differentiated impact of media structure on political information transmission. The structural diversity of media environments affects the noise characteristics of the media layer — but the observability framework suggests that even ideal media transmission cannot compensate for the aggregation loss that occurs before the media layer and the further aggregation that occurs after it.